An extremely large cosmological simulation—among the five most extensive ever conducted—is set to run on Mira this fall and exemplifies the scope of problems addressed on the leadership-class supercomputer at the U.S. Department of Energy’s (DOE’s) Argonne National Laboratory.

Argonne physicist and computational scientist Katrin Heitmann leads the project. Heitmann was among the first to leverage Mira’s capabilities when, in 2013, the IBM Blue Gene/Q system went online at the Argonne Leadership Computing Facility (ALCF), a DOE Office of Science User Facility. Among the largest cosmological simulations ever performed at the time, the Outer Rim Simulation she and her colleagues carried out enabled further scientific research for many years.

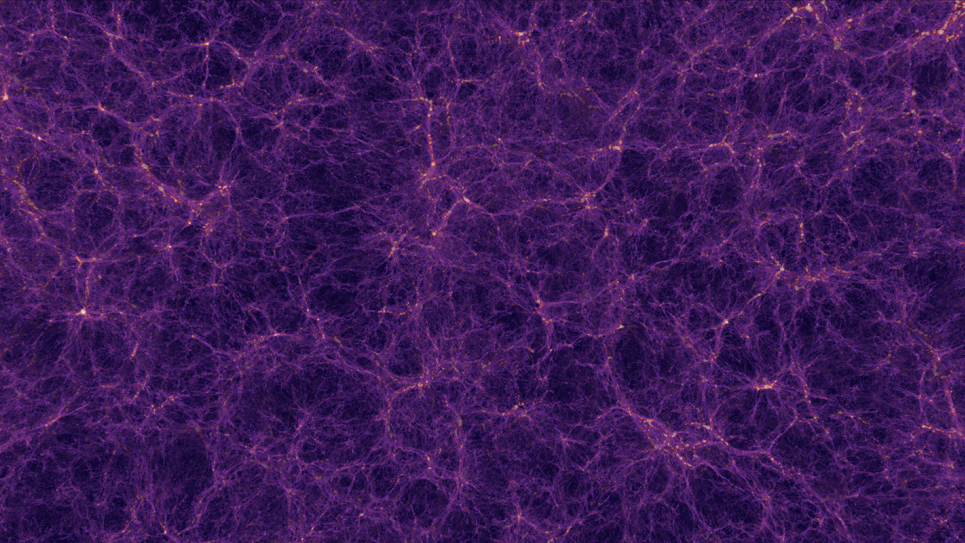

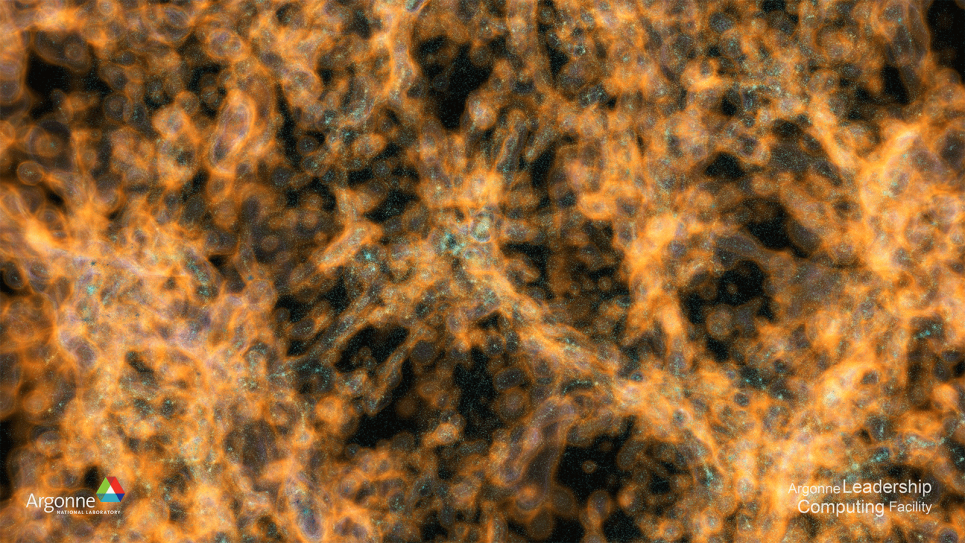

For the new effort, Heitmann has been allocated approximately 800 million core-hours to perform a simulation that reflects cutting-edge observational advances from satellites and telescopes and will form the basis for sky maps used by numerous surveys. Evolving a massive number of particles, the simulation is designed to help resolve mysteries of dark energy and dark matter.

“By transforming this simulation into a synthetic sky that closely mimics observational data at different wavelengths, this work can enable a large number of science projects throughout the research community,” Heitmann said. “But it presents us with a big challenge.” That is, in order to generate synthetic skies across different wavelengths, the team must extract relevant information and perform analysis either on the fly or after the fact in post-processing. Post-processing requires the storage of massive amounts of data—so much, in fact, that merely reading the data becomes extremely computationally expensive.
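The trade-off between the two approaches can be illustrated with a toy sketch. This is not HACC and does not reflect the team's actual analysis routines; the particle count, step count, bin count, and every function name below are hypothetical values chosen only for illustration. The sketch reduces each time step to a small histogram as the run proceeds, then compares the number of values kept with what storing every particle position for later post-processing would require.

```python
import random


def evolve(positions, rng, step_size=0.01):
    """Toy 'time step': nudge each particle randomly, wrapping into the unit interval."""
    return [(x + rng.uniform(-step_size, step_size)) % 1.0 for x in positions]


def density_histogram(positions, n_bins):
    """Reduce a full particle list to a small summary: particle counts per spatial bin."""
    counts = [0] * n_bins
    for x in positions:
        # Clamp the index so a value that rounds to exactly 1.0 lands in the last bin.
        counts[min(int(x * n_bins), n_bins - 1)] += 1
    return counts


def run_on_the_fly(n_particles=10_000, n_steps=50, n_bins=16, seed=0):
    """Analyze each step as it is produced and keep only the summaries."""
    rng = random.Random(seed)
    positions = [rng.random() for _ in range(n_particles)]
    summaries = []
    for _ in range(n_steps):
        positions = evolve(positions, rng)
        summaries.append(density_histogram(positions, n_bins))
    return summaries


summaries = run_on_the_fly()

# Values kept by on-the-fly analysis: one small histogram per step.
stored_on_the_fly = sum(len(s) for s in summaries)

# Values a post-processing workflow would need to write and read back:
# every particle position at every step (hypothetical sizes from above).
stored_post_processing = 10_000 * 50

print(stored_on_the_fly, stored_post_processing)
```

In this toy setup the on-the-fly path keeps 800 numbers where the post-processing path would store 500,000. The cost is that any analysis not chosen before the run cannot be recovered afterward.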

Since Mira was launched, Heitmann and her team have implemented in their Hardware/Hybrid Accelerated Cosmology Code (HACC) more sophisticated analysis tools for on-the-fly processing. “Moreover, compared to the Outer Rim Simulation, we’ve effected three major improvements,” she said. “First, our cosmological model has been updated so that we can run a simulation with the best possible observational inputs. Second, as we’re aiming for a full-machine run, volume will be increased, leading to better statistics. Most importantly, we set up several new analysis routines that will allow us to generate synthetic skies for a wide range of surveys, in turn allowing us to study a wide range of science problems.”

The team’s simulation will address numerous fundamental questions in cosmology. It is essential for refining existing predictive tools and for aiding the development of new models, with impact on both ongoing and upcoming cosmological surveys, including the Dark Energy Spectroscopic Instrument (DESI), the Large Synoptic Survey Telescope (LSST), SPHEREx, and the “Stage-4” ground-based cosmic microwave background experiment (CMB-S4). The value of the simulation derives from its tremendous volume (which is necessary to cover substantial portions of survey areas) and from attaining levels of mass and force resolution sufficient to capture the small structures that host faint galaxies.

The volume and resolution pose steep computational requirements, and because they are not easily met, few large-scale cosmological simulations are carried out. Contributing to the difficulty is the fact that the memory footprints of supercomputers have not advanced proportionally with processing speed in the years since Mira’s introduction. As a result, Mira, despite its relative age, remains well suited to a large-scale campaign when harnessed in full.

“A calculation of this scale is just a glimpse at what the exascale resources in development now will be capable of in 2021/22,” said Katherine Riley, ALCF Director of Science. “The research community will be taking advantage of this work for a very long time.”

Funding for the simulation is provided by DOE’s High Energy Physics program. Use of ALCF computing resources is supported by DOE’s Advanced Scientific Computing Research program.

Argonne National Laboratory seeks solutions to pressing national problems in science and technology. The nation's first national laboratory, Argonne conducts leading-edge basic and applied scientific research in virtually every scientific discipline. Argonne researchers work closely with researchers from hundreds of companies, universities, and federal, state and municipal agencies to help them solve their specific problems, advance America's scientific leadership and prepare the nation for a better future. With employees from more than 60 nations, Argonne is managed by UChicago Argonne, LLC for the U.S. Department of Energy's Office of Science.

The U.S. Department of Energy's Office of Science is the single largest supporter of basic research in the physical sciences in the United States and is working to address some of the most pressing challenges of our time. For more information, visit https://energy.gov/science