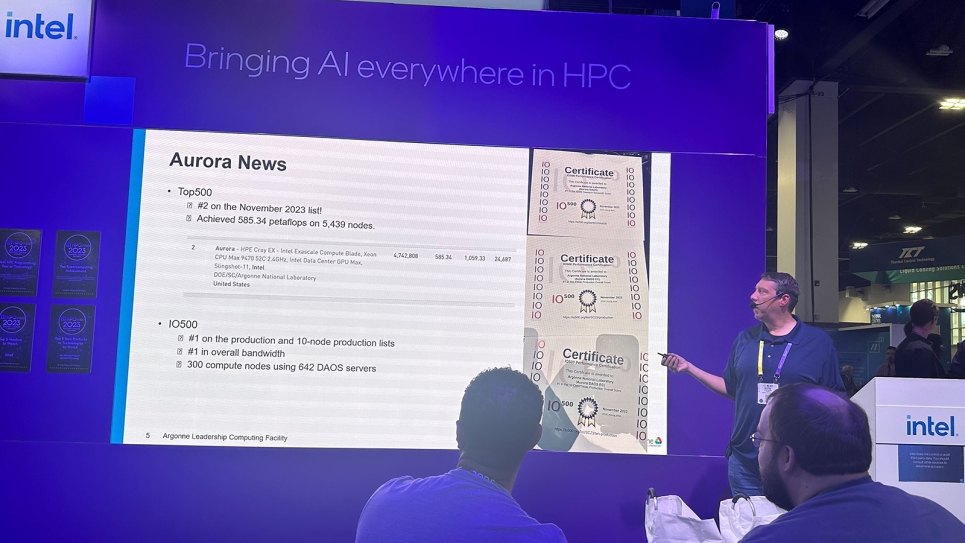

ALCF's Kevin Harms discusses Aurora during a tech talk at Intel's booth at the SC23 conference.

Argonne's Aurora exascale system registers early performance results at the SC23 conference.

The Aurora exascale computer is making good progress as it is being stood up at the U.S. Department of Energy’s (DOE) Argonne National Laboratory. Aurora demonstrated strong early performance numbers while still in the stabilization period.

Argonne submitted a partial result indicative of Aurora’s progress to the TOP500 rankings of the world’s most powerful supercomputers, achieving 585.34 petaflops on 5,439 nodes. Aurora’s storage system, DAOS, also earned the top spot on the IO500 production list, a semi-annual ranking of HPC storage performance.

“While our team continues to work to stabilize the full system for our scientific user community, we are very pleased to see how well the system performs at this point,” said Michael Papka, director of the Argonne Leadership Computing Facility (ALCF), a DOE Office of Science user facility. “The early performance results underscore Aurora’s immense potential for scientific computing.”

Built in partnership with Intel and Hewlett Packard Enterprise (HPE), Aurora is a massive machine with 166 racks and 10,624 nodes. The system comprises 21,248 Intel Xeon CPU Max Series processors and 63,744 Intel Data Center GPU Max Series processors.
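For readers who want to see how these figures relate, the short Python sketch below derives per-node and per-rack counts from the totals quoted in this article, along with the share of nodes used in the TOP500 submission. It uses only numbers stated here; the function and key names are illustrative, and the per-node throughput is a simple average over the partial run, not a projection of full-system performance.

```python
# Figures quoted in the article (no other data sources are assumed).
TOTAL_NODES = 10_624
TOTAL_CPUS = 21_248
TOTAL_GPUS = 63_744
RACKS = 166
HPL_PETAFLOPS = 585.34   # partial TOP500 result
HPL_NODES = 5_439        # nodes used for that result


def summarize():
    """Return simple ratios derived from the quoted totals."""
    return {
        "cpus_per_node": TOTAL_CPUS / TOTAL_NODES,
        "gpus_per_node": TOTAL_GPUS / TOTAL_NODES,
        "nodes_per_rack": TOTAL_NODES / RACKS,
        # Share of the machine's nodes that took part in the submitted run.
        "fraction_of_nodes_in_run": HPL_NODES / TOTAL_NODES,
        # Average throughput per participating node, in teraflops.
        "teraflops_per_node_in_run": HPL_PETAFLOPS * 1000 / HPL_NODES,
    }


if __name__ == "__main__":
    for name, value in summarize().items():
        print(f"{name}: {value:.3f}")
```

The totals work out to two CPUs and six GPUs per node, with 64 nodes per rack, and the submitted run used slightly more than half of the system's nodes.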

The Aurora installation team, including staff from Argonne, Intel and HPE, continues to work on system validation, verification and scale-up activities to deploy the supercomputer to the user community in 2024. As is the case with DOE’s other leadership computing systems, Aurora is a first-of-its-kind supercomputer equipped with leading-edge technologies that are being deployed at an unprecedented scale.

“We’re in the process of addressing some of the challenges that are expected when bringing up a system of this scale,” said Susan Coghlan, ALCF project director for Aurora. “We’re working through hardware and software issues that only rear their heads when you start approaching full-scale operations.”

As one of the world’s largest supercomputers for open science, Aurora features more GPUs and more network endpoints in its interconnect than any system to date.

“One area of focus is optimizing the flow of data between the network endpoints via the system’s interconnect,” said Bill Allcock, ALCF director of operations. “That is what enables Aurora’s 10,000-plus nodes to communicate and coordinate tasks.”

Some application teams participating in DOE’s Exascale Computing Project (ECP) and the ALCF’s Aurora Early Science Program (ESP) have begun using Aurora to scale and optimize their applications for the system’s initial science campaigns. Their work has included performing scientifically meaningful calculations across a wide range of research areas. The system will soon be opened up to all early science teams.

“We’ve been working closely with ECP and ESP researchers for the past several years to get them ramped up for Aurora,” said Kalyan Kumaran, ALCF director of technology. “Their preparatory work with the Aurora software development kit and our Sunspot system has helped ease the transition to running their codes on the full machine.”

Once their applications have been ported and configured for Aurora, the ECP and ESP teams will use the machine to carry out projects involving simulation, artificial intelligence, and data-intensive workloads to break new ground in areas ranging from fusion energy science and cosmology to cancer research and aircraft design. In addition to pursuing innovative research, these early users help stress test the supercomputer and identify where the system needs further tuning and optimization before deployment.

In 2024, an additional 24 research teams will begin using Aurora to ready their codes for the system via allocation awards from DOE’s Innovative and Novel Computational Impact on Theory and Experiment (INCITE) program.

“We can’t wait to get Aurora into production,” said Katherine Riley, ALCF director of science. “After all the hard work our team has put into standing up the system, we’re looking forward to seeing the incredible science it will enable.”