“Existing peptide drugs rank among our best medicines, but almost all of them have been discovered in nature. They’re not something we could design rationally—that is, until very recently,” said Vikram Mulligan, a biochemistry researcher at the Flatiron Institute.

Mulligan is the principal investigator on a project leveraging supercomputing resources at the Argonne Leadership Computing Facility (ALCF), a U.S. Department of Energy (DOE) Office of Science user facility located at DOE’s Argonne National Laboratory, with the aim of improving the production of peptide drugs. Peptides are chains of amino acids similar to those that form proteins, but shorter.

The original motivation for the research lies in Mulligan’s postdoctoral work at the University of Washington’s Baker Lab, in which he sought to apply what were determined to be accurate methods for designing proteins that could fold in specific ways.

“Proteins are the functional molecules in our cells, they’re the molecules responsible for all the interesting cellular activities that take place,” he explained. “It is their geometry that dictates those activities.”

Naturally occurring proteins—proteins produced by living cells—are built from just 20 amino acids. In the laboratory, however, chemists can synthesize molecules from thousands of different building blocks, allowing for innumerable structure combinations. This effectively means that a scientist might be able to manufacture, for example, enzymes capable of catalyses that no natural enzyme could perform.

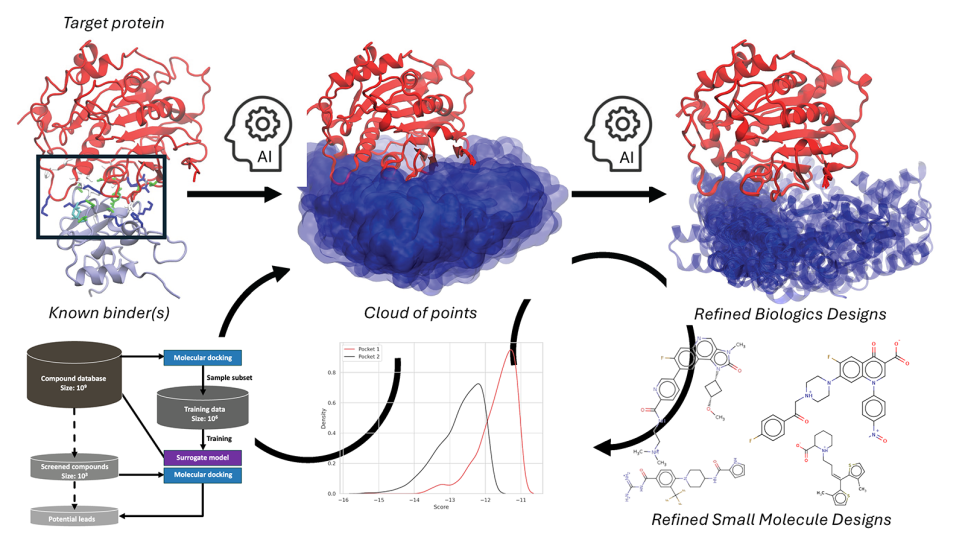

Mulligan is particularly interested in making small peptides that can act as drugs that bind to some target, either in the human body or in a pathogen, and that treat a given disease by altering the function of that target.

To this end, via a project supported through DOE’s INCITE program, Mulligan is using ALCF computational resources, including the Theta supercomputer, to advance the design of new peptide compounds with techniques including physics-based simulations and machine learning. His team’s work at the ALCF is driven by the Rosetta software suite, the applications and libraries of which enable protein and peptide structure prediction and design, RNA fold prediction, and more.

Between small-molecule drugs and protein drugs

Mulligan’s research aims to treat a broad spectrum of diseases, as evidence suggests that peptide-based compounds have the potential to operate as an especially versatile class of drugs.

Small-molecule drugs are easily and effectively administered, but their primary therapeutic limitation is that they often bind other sites in a patient with the same affinity as they do the intended target. This off-target binding is a source of side effects.

“Small-molecule drugs are like simple luggage keys in that they can unlock more than what they’re made for,” Mulligan said.

Larger protein drugs such as antibodies, meanwhile, have the advantage of acting on their targets with a high degree of specificity, owing to their comparatively large surface area. Their disadvantages, however, include an inability to cross biological barriers (such as the gut-blood barrier or the blood-brain barrier) or to enter cells, which limits their targets to extracellular proteins. And because immune systems have evolved to recognize foreign proteins and remove them from the body, protein drugs must also evade these highly efficient mechanisms, an additional challenge for researchers.

Peptide drugs split the difference in terms of size, combining certain advantages of small-molecule drugs with those of protein drugs: they’re small enough to be permeable and evade the immune system, but large enough that they’re unlikely to bind to much aside from the intended targets.

To design such drugs, Mulligan’s work applies methods for protein design to what are called noncanonical design molecules, which comprise nonnatural building blocks and fold as proteins do, with the potential to bind to a target.

Physics-based simulations

In protein design, detailed structural information can be inferred from the 200,000 or so protein structures that have been solved experimentally. Such information is much harder to come by for peptides built in the laboratory. At present, a mere two dozen peptide structures have been solved, several of which were designed by Mulligan himself or by researchers applying his methods.

Because many more peptide structures must be solved before machine learning can guide the design of new peptide drugs, the team continues to rely on physics-based simulations for the time being, developing new strategies both for sampling conformations and for exploring possible amino-acid sequences.

“With peptides as with proteins, the particular sequence of amino acids determines how the molecule folds up into a specific 3D structure, and this specific 3D structure determines the molecule’s function,” Mulligan said. “If you can get a molecule to rigidly fold into a precise shape, then you can create a compound that binds to that target. And if that target is, say, the active site of an enzyme, then you can inhibit that enzyme’s activity.”

That was the idea as conceived in 2012. “It took a long time to get it to work, but we’re now at the point where we can design, relatively robustly, folding peptides constructed from mixtures of natural and nonnatural amino-acid building blocks.”

A particular success among the handful of molecules that Mulligan’s team has designed binds to NDM-1, or New Delhi metallo-beta-lactamase 1, an enzyme responsible for antibiotic resistance in certain bacteria.

“The notion here was that if we could make a drug that inhibits the NDM-1 enzyme, this drug could be administered alongside conventional antibiotics, reviving the usefulness of those sidelined by resistance,” he said.

However, while this drug progressed from computer-aided design to laboratory manufacture, its ability to cross biological barriers must be fine-tuned in order to proceed through clinical trials.

Mulligan explained, “The next challenge is to try to make something that hits its target and also has all the desirable drug properties like good pharmacokinetics, good persistence in the body, and the ability to pass biological barriers or enter cells and get it wherever it needs to go.”

Furthermore, different biological barriers represent different degrees of difficulty when designing drugs. “The low-hanging fruit are targets in the gut, because those can be reached and acted on simply via oral medicine,” he said. “The most challenging cases are intracellular cancer targets, which necessitate that the drugs passively diffuse into cells—an ongoing problem in science.”

Approximating with proteins

The present physics-based methods for design include quantum chemistry calculations, which compute the energy of molecules with extreme precision by solving the Schrödinger equation, the central equation of quantum mechanics. Because such solutions have high computational costs that grow exponentially as the objects of study increase in size and complexity, they have historically been obtained for only the smallest molecular systems. To reduce these costs, the research team employs an approximation known as a force field.

“We pretend that the atoms in our system are small spheres exerting forces on each other,” Mulligan explained, “a classical approximation that reduces our accuracy but gives us a lot of speed and makes tractable a lot of otherwise intractable equations.”
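The classical picture Mulligan describes can be illustrated with a minimal sketch. It assumes a generic pairwise energy made of a Lennard-Jones term plus a Coulomb term; this is a common textbook form, not Rosetta's actual energy function, and the function names and parameter values (`epsilon`, `sigma`, the charges) are hypothetical choices for illustration only.

```python
import math
from itertools import combinations

# Approximate electrostatic conversion factor in kcal*angstrom/(mol*e^2);
# the exact value varies slightly between molecular-mechanics packages.
COULOMB_CONSTANT = 332.06


def pair_energy(r, epsilon, sigma, q1, q2):
    """Energy of two point-like atoms separated by distance r (angstroms).

    Lennard-Jones models short-range repulsion and weak attraction;
    the Coulomb term models the interaction of partial charges q1 and q2.
    """
    lennard_jones = 4.0 * epsilon * ((sigma / r) ** 12 - (sigma / r) ** 6)
    coulomb = COULOMB_CONSTANT * q1 * q2 / r
    return lennard_jones + coulomb


def total_energy(atoms, epsilon=0.1, sigma=3.4):
    """Sum pair energies over all atom pairs.

    atoms: list of (x, y, z, charge) tuples. Using one epsilon and sigma
    for every pair is a simplification made for this sketch.
    """
    energy = 0.0
    for (x1, y1, z1, q1), (x2, y2, z2, q2) in combinations(atoms, 2):
        r = math.dist((x1, y1, z1), (x2, y2, z2))
        energy += pair_energy(r, epsilon, sigma, q1, q2)
    return energy


if __name__ == "__main__":
    # Three hypothetical atoms with small partial charges.
    atoms = [(0.0, 0.0, 0.0, 0.2), (3.8, 0.0, 0.0, -0.2), (0.0, 3.8, 0.0, 0.0)]
    print(f"Total energy: {total_energy(atoms):.3f} kcal/mol")
```

Each pair costs a handful of arithmetic operations, so the total scales with the number of atom pairs rather than exponentially with system size. That is the speed gained in exchange for the accuracy lost.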

The accuracy of the method diminishes the less the peptide building-blocks resemble conventional amino acids: building blocks that bear strong similarities to conventional amino acids permit the use of force-field approximations generated by training machine learning applications on protein structures, but their applicability is comparatively tenuous if the building blocks have exotic features or contain certain chemical elements.

As such, one goal of the research team is to incorporate quantum chemistry calculations into the design and validation pipelines while minimizing, as far as possible, the tradeoffs between accuracy and speed inherent to such approximations.

Benchmarking and validation

Incorporating the calculations requires benchmarking and testing to determine the appropriate level of theory and which approximations are suitable.

“There are all sorts of approximations that quantum chemists have generated to try to scale quantum chemistry methods,” Mulligan said. “Many of them are quite good—are established practices within the quantum chemistry community—but have yet to take root in the molecular-modeling community. With something like the force-field approximation, we need to ask the questions, how do we use it efficiently for molecular-modeling tasks? Under what circumstances is it good enough to use the force field? Under what circumstances do we want to use this approximation and under what circumstances is this approximation not good enough? Would we have to employ a higher level of quantum chemistry theory in order to complete the necessary benchmarking? To perform all the concomitant trial and error, we need powerful computational resources, which is where leadership-class systems are especially important. To this end, many of our calculations are fairly parallelizable.”

Validation strategies involve taking a designed peptide with a certain amino-acid sequence and altering the sequence to create all sorts of alternative conformations to identify those that result in the desired fold.

Such approaches to conformational sampling, in which countless molecular arrangements are explored, are similarly parallelizable, enabling the researchers to carry out the conformation analyses across thousands of nodes on the ALCF’s Theta supercomputer. These brute-force calculations lay the foundation for data repositories that the team will use to train machine-learning models.

“Part of the challenge of machine learning is figuring out how to use it well,” Mulligan said. “Because a machine-learning model is going to make mistakes regardless of how it’s programmed, I tried to train this one to generate more false positives than false negatives—to identify more things as peptides that fold than it should. It’s easier to sift through extra hay, if you will, further down the line in our search for a needle.”
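One generic way to bias a classifier toward false positives rather than false negatives is to lower its decision threshold until nearly every true folder is kept. The sketch below illustrates that idea only; the article does not say how Mulligan's model was trained, so this is a swapped-in technique, and the scores, labels, and function names are hypothetical.

```python
def pick_threshold(scores, labels, min_recall=0.95):
    """Return the highest score threshold whose recall is at least min_recall.

    scores: model outputs, higher meaning "more likely to fold".
    labels: 1 for peptides known to fold, 0 otherwise.
    A lower threshold keeps more true folders at the price of extra
    false positives to filter out later.
    """
    positives = sum(labels)
    if positives == 0:
        raise ValueError("need at least one positive example")
    for threshold in sorted(set(scores), reverse=True):
        true_positives = sum(
            1 for s, y in zip(scores, labels) if y and s >= threshold
        )
        if true_positives / positives >= min_recall:
            return threshold
    return min(scores)  # fallback: accept everything


def error_counts(scores, labels, threshold):
    """Count (false_positives, false_negatives) at a given threshold."""
    false_pos = sum(1 for s, y in zip(scores, labels) if not y and s >= threshold)
    false_neg = sum(1 for s, y in zip(scores, labels) if y and s < threshold)
    return false_pos, false_neg


if __name__ == "__main__":
    # Hypothetical validation set of six scored peptide designs.
    scores = [0.9, 0.8, 0.6, 0.4, 0.3, 0.1]
    labels = [1, 1, 0, 1, 0, 0]
    threshold = pick_threshold(scores, labels, min_recall=1.0)
    print(threshold, error_counts(scores, labels, threshold))
```

On this toy data, demanding full recall moves the threshold down to 0.4: no folding peptide is missed, and one non-folder slips through as extra "hay" for later validation steps to remove.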

Successful validation of peptide designs using quantum chemistry calculations itself represents a significant advance. Moreover, in the aforementioned NDM-1 example (that concerning the mitigation of antibiotic resistance among bacteria), all the design and validation work was completed using the force-field approximations.

Adapting to exascale

Ongoing and future work requires substantial revision of the Rosetta software suite to optimize it for next-generation, accelerator-based computing systems such as the ALCF’s Polaris and Aurora supercomputers.

“Rosetta started its life in the late 1990s as a protein modeling package written in FORTRAN, and it’s been subsequently rewritten several times,” Mulligan said. “While it’s written in modern C++, it is beginning to show its age; even the latest version was written more than 10 years ago. We’ve continually refactored the code to try to make it more general, try to make it work for nonnatural amino acids, but taking advantage of modern hardware has posed challenges.” While the software parallelizes on central processing units (CPUs) and scales well, graphics processing units (GPUs) are not supported to their full capability.

“Because Polaris is a hybrid CPU-GPU system, as the exascale Aurora system will be, I and others are working on rewriting Rosetta’s core functionality from scratch. By creating a successor to the current software, it’s my hope that we can continue to use these software methods efficiently on new hardware for years to come, and that we can build atop them to permit more challenging molecular design tasks to be tackled.”

==========

The Argonne Leadership Computing Facility provides supercomputing capabilities to the scientific and engineering community to advance fundamental discovery and understanding in a broad range of disciplines. Supported by the U.S. Department of Energy’s (DOE’s) Office of Science, Advanced Scientific Computing Research (ASCR) program, the ALCF is one of two DOE Leadership Computing Facilities in the nation dedicated to open science.

Argonne National Laboratory seeks solutions to pressing national problems in science and technology. The nation's first national laboratory, Argonne conducts leading-edge basic and applied scientific research in virtually every scientific discipline. Argonne researchers work closely with researchers from hundreds of companies, universities, and federal, state and municipal agencies to help them solve their specific problems, advance America's scientific leadership and prepare the nation for a better future. With employees from more than 60 nations, Argonne is managed by UChicago Argonne, LLC for the U.S. Department of Energy's Office of Science.

The U.S. Department of Energy's Office of Science is the single largest supporter of basic research in the physical sciences in the United States and is working to address some of the most pressing challenges of our time. For more information, visit https://energy.gov/science