The U.S. Department of Energy’s (DOE) Argonne National Laboratory will be home to one of the nation’s first exascale supercomputers when Aurora is deployed. To prepare codes for the architecture and scale of the system, 15 research teams are taking part in the Aurora Early Science Program (ESP) through the Argonne Leadership Computing Facility (ALCF), a DOE Office of Science user facility. With access to pre-production time on the supercomputer, these researchers will be among the first in the world to use an exascale machine for science. This is one of their stories.

Humans have poked and prodded the brain for millennia to understand its anatomy and function. But even after untold advances in our understanding of the brain, many questions remain.

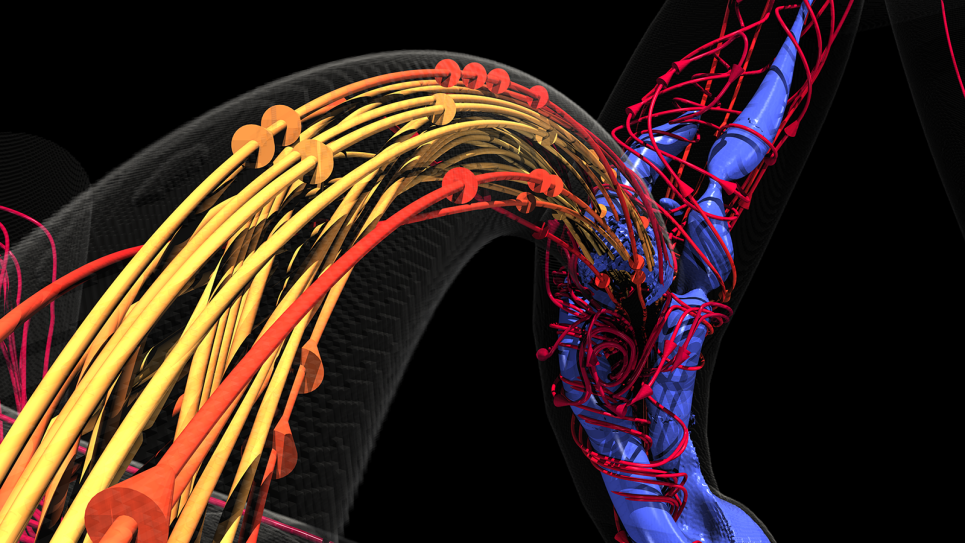

Using far more advanced imaging techniques than those of their predecessors, researchers at the DOE’s Argonne National Laboratory are working to develop a brain connectome: an accurate map that lays out every connection between every neuron and the precise location of the associated dendrites, axons, and synapses that help form the communications or signaling pathways of a brain.

Such a map will allow researchers to answer questions such as how brain structure is affected by learning or degenerative diseases, and how the brain ages.

Led by Argonne senior computer scientist Nicola Ferrier, the project, “Enabling Connectomics at Exascale to Facilitate Discoveries in Neuroscience,” is a wide-ranging collaboration between computer scientists and neuroscientists, and academic and corporate research institutions, including Google and the Kasthuri Lab at the University of Chicago.

It is among a select group of projects supported by the ALCF’s Aurora Early Science Program (ESP) working to prepare codes for the architecture and scale of its forthcoming exascale supercomputer, Aurora.

And it is the kind of research that was all but impossible until the advancement of ultra-high-resolution imaging techniques and more powerful supercomputing resources. These technologies allow for finer resolution of microscopic anatomy and the ability to wrangle the sheer size of the data, respectively.

Only the computing power of a machine like Aurora, an exascale system capable of performing a billion billion calculations per second, will meet the near-term challenges in brain mapping.

Without that power today, Ferrier and her team are working on smaller brain samples, some of them only one cubic millimeter. Even this small mass of neurological matter can generate a petabyte of data, an estimated one-twentieth of the information stored in the Library of Congress.

And with the goal of one day mapping a whole mouse brain, about one cubic centimeter, the amount of data would increase a thousandfold at a reasonable resolution, noted Ferrier.

“If we don’t improve today’s technology, the compute time for a whole mouse brain would be something like 1,000,000 days of work on current supercomputers,” she said. “Using all of Aurora, if everything worked beautifully, it could still take 1,000 days.”

“So, the problem of reconstructing a brain connectome requires exascale resources and beyond,” she added.
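The scaling figures quoted above can be checked with simple arithmetic. The sketch below is only a back-of-envelope illustration: it uses the article's round numbers (about one petabyte per cubic millimeter, a mouse brain of about one cubic centimeter, and the two compute-time estimates), and the function and variable names are hypothetical.

```python
# Back-of-envelope check using only the round figures quoted in the article.
PETABYTES_PER_CUBIC_MM = 1   # about one petabyte per cubic millimeter of tissue
MM_PER_CM = 10

def cubic_mm(side_cm):
    """Volume, in cubic millimeters, of a cube with the given side in centimeters."""
    return (side_cm * MM_PER_CM) ** 3

def data_petabytes(side_cm):
    """Estimated data volume for a cube of tissue, in petabytes."""
    return cubic_mm(side_cm) * PETABYTES_PER_CUBIC_MM

# A whole mouse brain is roughly a 1 cm cube: 1,000 cubic millimeters.
mouse_brain_pb = data_petabytes(1)

# Compute-time estimates from the quote, in days.
days_today = 1_000_000
days_aurora = 1_000
speedup = days_today / days_aurora   # factor between the two estimates
years_on_aurora = days_aurora / 365  # about 2.7 years

print(mouse_brain_pb, "petabytes, i.e. about one exabyte")
print("speedup factor:", speedup)
print("years on Aurora:", round(years_on_aurora, 1))
```

Even under the optimistic estimate, a full mouse brain would occupy the whole machine for several years, which is the point behind "exascale resources and beyond."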

Working primarily with mouse brain samples, Ferrier’s ESP team is developing a computational pipeline to analyze the data obtained from a complicated process of staining, slicing, and imaging.

The process begins with samples of brain tissue which are stained with heavy metals to provide visual contrast and then sliced extremely thin with a precision cutting tool called an ultramicrotome. These slices are mounted for imaging with Argonne’s massive-data-producing electron microscope, generating a collection of smaller images, or tiles.

“The resulting tiles have to be digitally reassembled, or stitched together, to reconstruct the slice. And each of those slices has to be stacked and aligned properly to reproduce the 3D volume. At this point, neurons are traced through the 3D volume by a process known as segmentation to identify neuron shape and synaptic connectivity,” explained Ferrier.
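The stitch-then-stack steps Ferrier describes can be illustrated with a toy example. This is a minimal sketch, not the team's pipeline: it assumes the tiles are equally sized, do not overlap, and are already perfectly aligned, whereas real microscopy tiles need registration before they can be joined. All names (`stitch_tiles`, `stack_slices`, `make_tile`) are hypothetical, and images are plain nested lists to keep the example dependency-free.

```python
from itertools import chain

def stitch_row(tile_row):
    """Join a row of tiles left to right by concatenating matching pixel rows."""
    height = len(tile_row[0])
    return [list(chain.from_iterable(tile[r] for tile in tile_row))
            for r in range(height)]

def stitch_tiles(tile_grid):
    """Reassemble a 2D grid of tiles (list of tile rows) into one slice image."""
    slice_rows = []
    for tile_row in tile_grid:
        slice_rows.extend(stitch_row(tile_row))
    return slice_rows

def stack_slices(slices):
    """Stack slices along a new leading axis to form a 3D volume (z, y, x)."""
    return [s for s in slices]

def make_tile(value, height=2, width=2):
    """Toy tile filled with a single intensity value."""
    return [[value] * width for _ in range(height)]

# Toy data: 3 slices, each imaged as a 2 x 3 grid of 2 x 2 pixel tiles.
slices = []
for z in range(3):
    grid = [[make_tile(z * 10 + row * 3 + col) for col in range(3)]
            for row in range(2)]
    slices.append(stitch_tiles(grid))

volume = stack_slices(slices)
shape = (len(volume), len(volume[0]), len(volume[0][0]))
print(shape)  # (3, 4, 6)
```

Segmentation would then operate on `volume`, tracing structures across neighboring slices rather than within a single image.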

This segmentation step relies on an artificial intelligence technique called a convolutional neural network; in this case, a type of network developed by Google for the reconstruction of neural circuits from electron microscopy images of the brain. While it has demonstrated better performance than past approaches, the technique also comes with a high computational cost when applied to large volumes.

“With the larger samples expected in the next decade, such as the mouse brain, it’s essential that we prepare all of the computing tasks for the Aurora architecture and are able to scale them efficiently on its many nodes. This is a key part of the work that we’re undertaking in the ESP project,” said Tom Uram, an ALCF computer scientist working with Ferrier.

The team has already scaled parts of this process to thousands of nodes on ALCF’s Theta supercomputer.

“Using supercomputers for this work demands efficiency at every scale, from distributing large datasets across the compute nodes, to running algorithms on the individual nodes with high-bandwidth communication, to writing the final results to the parallel file system,” said Ferrier.
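One part of that efficiency, distributing a large dataset across compute nodes, can be sketched as splitting a 3D volume into blocks and assigning the blocks to nodes. The example below is an illustrative assumption rather than the project's actual scheme: the block size, node count, and round-robin assignment are hypothetical, and a real pipeline would likely need overlapping margins so that structures crossing block boundaries can be merged.

```python
from itertools import product

def block_ranges(extent, block):
    """Split [0, extent) into consecutive (start, stop) ranges of at most `block`."""
    return [(start, min(start + block, extent))
            for start in range(0, extent, block)]

def partition_volume(shape, block_shape):
    """List every block of a volume as a tuple of per-axis (start, stop) ranges."""
    per_axis = [block_ranges(e, b) for e, b in zip(shape, block_shape)]
    return list(product(*per_axis))

def assign_round_robin(blocks, num_nodes):
    """Assign blocks to nodes in turn so each node gets a similar share."""
    plan = {node: [] for node in range(num_nodes)}
    for i, blk in enumerate(blocks):
        plan[i % num_nodes].append(blk)
    return plan

# Hypothetical volume of 100 x 256 x 256 voxels, cut into 50 x 128 x 128 blocks.
blocks = partition_volume((100, 256, 256), (50, 128, 128))
plan = assign_round_robin(blocks, num_nodes=4)

for node, node_blocks in plan.items():
    print(node, len(node_blocks))
```

Round-robin keeps the per-node block counts within one of each other; it ignores data locality, which a production system would also have to weigh.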

At that point, she added, large-scale analysis of the results truly starts to probe questions about what emerges from the neurons and their connectivity.

Ferrier also believes that her team’s preparations for exascale will benefit other exascale system users. For example, the algorithms they are developing for their electron microscopy data will find application with X-ray data, especially with the upcoming upgrade to Argonne’s Advanced Photon Source (APS), a DOE Office of Science user facility.

“We have been evaluating these algorithms on X-rays and have seen early success. And the APS Upgrade will allow us to see finer structure,” said Ferrier. “So, I foresee that some of the methods that we have developed will be useful beyond just this specific project.”

With the right tools in place, and exascale computing at hand, the development and analysis of large-scale, precision connectomes will help researchers fill the gaps in some age-old questions.

==========

The Argonne Leadership Computing Facility provides supercomputing capabilities to the scientific and engineering community to advance fundamental discovery and understanding in a broad range of disciplines. Supported by the U.S. Department of Energy’s (DOE’s) Office of Science, Advanced Scientific Computing Research (ASCR) program, the ALCF is one of two DOE Leadership Computing Facilities in the nation dedicated to open science.

Argonne National Laboratory seeks solutions to pressing national problems in science and technology. The nation’s first national laboratory, Argonne conducts leading-edge basic and applied scientific research in virtually every scientific discipline. Argonne researchers work closely with researchers from hundreds of companies, universities, and federal, state and municipal agencies to help them solve their specific problems, advance America’s scientific leadership and prepare the nation for a better future. With employees from more than 60 nations, Argonne is managed by UChicago Argonne, LLC for the U.S. Department of Energy’s Office of Science.

The U.S. Department of Energy’s Office of Science is the single largest supporter of basic research in the physical sciences in the United States and is working to address some of the most pressing challenges of our time. For more information, visit https://energy.gov/science.