Accurate weather forecasting models are essential for every aspect of the American economy, from aviation to shipping. To date, weather models have been primarily based on equations related to thermodynamics and fluid dynamics in the atmosphere. These models are tremendously computationally expensive and are typically run on large supercomputers.

Researchers from private-sector companies like Nvidia and Google have started developing large artificial intelligence (AI) models, known as foundation models, for weather forecasting. Recently, scientists at the U.S. Department of Energy’s (DOE) Argonne National Laboratory, in close collaboration with researchers Aditya Grover and Tung Nguyen at the University of California, Los Angeles, have begun to investigate this alternative type of model. In some cases, this type of model could produce even more accurate forecasts than existing numerical weather prediction models at a fraction of the computational cost.

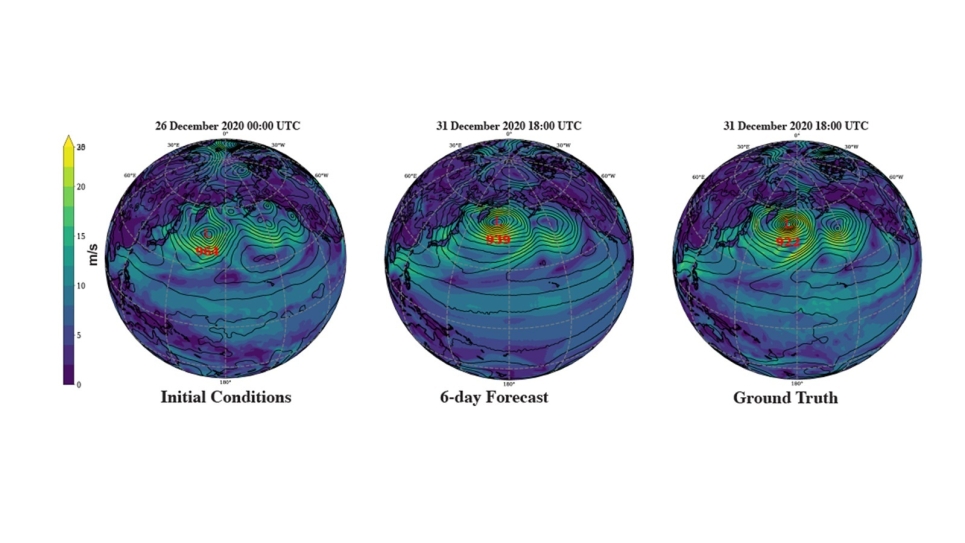

Some of these models outperform current models’ prediction capability beyond seven days, giving scientists an additional window into the weather.

Foundation models are built on the use of “tokens,” which are small bits of information that an AI algorithm uses to learn the physics that drives the weather. Many foundation models are used for natural language processing, which means handling words and phrases. For these large language models, these tokens are words or bits of language that the model predicts in sequence. For this new weather prediction model, the tokens are instead pictures — patches of charts depicting things like humidity, temperature and wind speed at various levels of the atmosphere.

“Instead of being interested in a text sequence, you’re looking at spatial-temporal data, which is represented in images,” said Argonne computer scientist Sandeep Madireddy. “When using these patches of images in the model, you have some notion of their relative positions and how they interact because of how they’re tokenized.”
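To make the idea of image-patch tokens concrete, here is a minimal sketch in Python with NumPy. It is not the Argonne team's code. The grid size, the patch size, the three stacked variables (standing in for fields like temperature, humidity and wind speed) and the helper names `patchify` and `patch_positions` are all assumptions chosen for illustration. The sketch cuts a gridded snapshot into square patches, flattens each patch into one token, and records each patch's row and column so that relative positions stay available to a model.

```python
import numpy as np


def patchify(fields: np.ndarray, patch: int) -> np.ndarray:
    """Split a (variables, lat, lon) array into flattened patch tokens.

    Returns an array of shape (n_patches, variables * patch * patch),
    with patches ordered row by row across the grid.
    """
    v, h, w = fields.shape
    if h % patch or w % patch:
        raise ValueError("grid dimensions must be divisible by the patch size")
    rows, cols = h // patch, w // patch
    # (v, rows, patch, cols, patch) -> (rows, cols, v, patch, patch)
    blocks = fields.reshape(v, rows, patch, cols, patch).transpose(1, 3, 0, 2, 4)
    return blocks.reshape(rows * cols, v * patch * patch)


def patch_positions(h: int, w: int, patch: int) -> np.ndarray:
    """Return the (row, column) index of every patch, in token order."""
    rows, cols = h // patch, w // patch
    rr, cc = np.meshgrid(np.arange(rows), np.arange(cols), indexing="ij")
    return np.stack([rr.ravel(), cc.ravel()], axis=1)


# Hypothetical toy snapshot: 3 variables on a coarse 32 x 64 lat-lon grid.
rng = np.random.default_rng(0)
fields = rng.standard_normal((3, 32, 64))

tokens = patchify(fields, patch=8)       # one token per 8 x 8 patch
positions = patch_positions(32, 64, 8)   # where each token sits on the grid

print(tokens.shape, positions.shape)     # (32, 192) (32, 2)
```

In a transformer-style model, tokens like these would typically be passed through a learned embedding and combined with a positional encoding built from `positions`. That step is omitted here because the article does not describe it.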

The scientific team can use quite low-resolution data and still come up with accurate predictions, said Argonne atmospheric scientist Rao Kotamarthi. “The philosophy of weather forecasting has for years been to get to higher resolutions for better forecasts. This is because you are able to resolve the physics more precisely, but of course this comes at great computational cost,” he said. “But we’re finding now that we’re actually able to get comparable results to existing high-resolution models even at coarse resolution with the method we are using.”

While reliable near-term weather forecasting seems to be an achievable goal with AI, using the same approach for climate modeling, which involves analyzing weather over time, presents an additional challenge. “In theory, foundation models could also be used for climate modeling. However, there are more incentives for the private sector to pursue new approaches for weather forecasting than there are for climate modeling,” Kotamarthi said. “Work on foundation models for climate modeling will likely continue to be the purview of the national labs and universities dedicated to pursuing solutions in the general public interest.”

One reason climate modeling is so difficult is that the climate is changing in real time, said Argonne environmental scientist Troy Arcomano. “With the climate, we’ve gone from what had been a largely stationary state to a non-stationary state. This means that all of our statistics of the climate are changing with time due to the additional carbon in the atmosphere. That carbon is also changing the Earth’s energy budget,” he said. “It’s complicated to figure out numerically and we’re still looking for ways to use AI.”

The introduction of Argonne’s new exascale supercomputer, Aurora, will help researchers train a very large AI-based model that will work at very high resolutions. “We need an exascale machine to really be able to capture a fine-grained model with AI,” Kotamarthi said.

The research was funded by Argonne’s Leadership-Directed Research and Development Program and the model was run on Polaris, a supercomputer at the Argonne Leadership Computing Facility, a DOE Office of Science user facility.

A paper based on the study received the Best Paper Award at the workshop “Tackling Climate Change with Machine Learning.” The workshop was held on May 10 in Vienna, Austria, in conjunction with the International Conference on Learning Representations 2024.